The “eyes and ears” of the SeaClear robotic platform, the observation ROV, has been going through a development phase so that it can accurately perceive its surrounding environment. We examined different computer vision tasks, such as instance segmentation and object detection, to select the one that best satisfies the SeaClear requirements and limitations in terms of accuracy and computational cost. Like other neural network architectures, the convolutional neural networks (CNNs) employed for this task require large amounts of data. Hence, additional data were collected during the SeaClear pilot tests and afterwards processed and labelled. In total, we generated 8610 labelled image samples from the measurements conducted during the SeaClear pilot.

After generating a sufficiently large dataset, we examined different CNN architectures. We explored both two-stage and one-stage CNNs, and both showed promising results. Two-stage CNNs first generate regions of interest and then use these region proposals to perform object classification and bounding box regression. In contrast, one-stage CNNs perform object detection in a single pass, which typically gives much faster inference.
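To make the structural difference concrete, here is a minimal toy sketch in Python. It is not the SeaClear detector: the "networks" are plain stand-in functions, the image is a small grid of class ids, and the class names (`bottle`, `can`) and all function names are hypothetical choices made for illustration. It only shows the control flow: a two-stage detector proposes regions first and then classifies and refines each one, while a one-stage detector predicts class and box in a single pass over a grid.

```python
# Toy illustration only: stand-in functions, not trained CNNs.
from typing import List, Tuple

Box = Tuple[int, int, int, int]      # (row_min, col_min, row_max, col_max), inclusive
Detection = Tuple[str, Box]          # (class label, bounding box)

# Hypothetical class ids for this toy image; 0 means background.
LABELS = {1: "bottle", 2: "can"}


def make_demo_image() -> List[List[int]]:
    """Build an 8x8 toy image with one 'bottle' and one 'can'."""
    image = [[0] * 8 for _ in range(8)]
    for r in (1, 2):
        for c in (1, 2):
            image[r][c] = 1
    for r in (5, 6):
        for c in (5, 6, 7):
            image[r][c] = 2
    return image


def summarize(pixels: List[Tuple[int, int, int]]) -> Detection:
    """Majority class of the object pixels plus the tight box around them."""
    values = [v for _, _, v in pixels]
    label = LABELS[max(set(values), key=values.count)]
    rows = [r for r, _, _ in pixels]
    cols = [c for _, c, _ in pixels]
    return label, (min(rows), min(cols), max(rows), max(cols))


def object_pixels(image, box: Box) -> List[Tuple[int, int, int]]:
    """Collect (row, col, class id) for non-background pixels inside a box."""
    r0, c0, r1, c1 = box
    return [(r, c, image[r][c])
            for r in range(r0, r1 + 1) for c in range(c0, c1 + 1)
            if image[r][c] != 0]


def grid_cells(image, size: int) -> List[Box]:
    """Split the image into size x size cells, clipped at the borders."""
    h, w = len(image), len(image[0])
    return [(r, c, min(r + size, h) - 1, min(c + size, w) - 1)
            for r in range(0, h, size) for c in range(0, w, size)]


def propose_regions(image, size: int) -> List[Box]:
    """Stage 1: class-agnostic proposals (cells that contain any object pixel)."""
    return [box for box in grid_cells(image, size) if object_pixels(image, box)]


def detect_two_stage(image, size: int = 4) -> List[Detection]:
    """Stage 2 runs once per proposal: classify it and refine its box."""
    proposals = propose_regions(image, size)
    return [summarize(object_pixels(image, box)) for box in proposals]


def detect_one_stage(image, size: int = 4) -> List[Detection]:
    """Single pass: every grid cell directly emits a class and a box, if any."""
    detections = []
    for box in grid_cells(image, size):
        pixels = object_pixels(image, box)
        if pixels:
            detections.append(summarize(pixels))
    return detections


if __name__ == "__main__":
    demo = make_demo_image()
    print(detect_two_stage(demo))
    print(detect_one_stage(demo))
```

On this toy image both functions return the same detections. In real detectors the trade-off is that the second stage of a two-stage model runs once per proposal, which adds computation, whereas a one-stage model produces all predictions in one forward pass.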

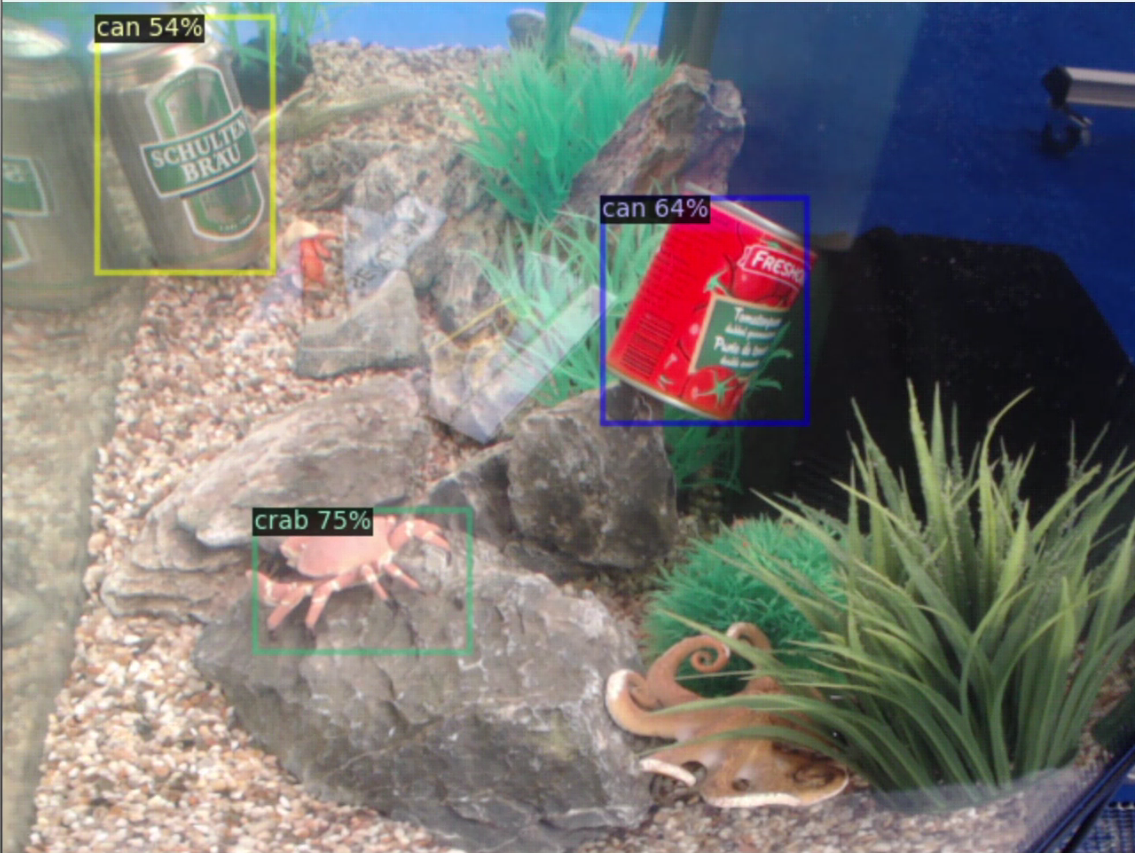

Classification example in a controlled environment

Next, we want to focus on optimizing these CNN architectures and testing them in real time. Stay tuned for more updates to come.